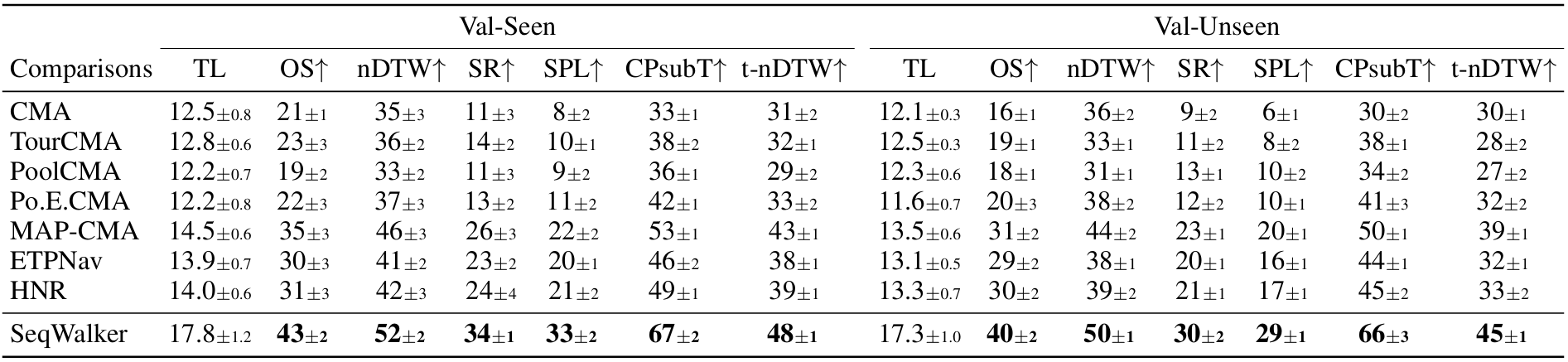

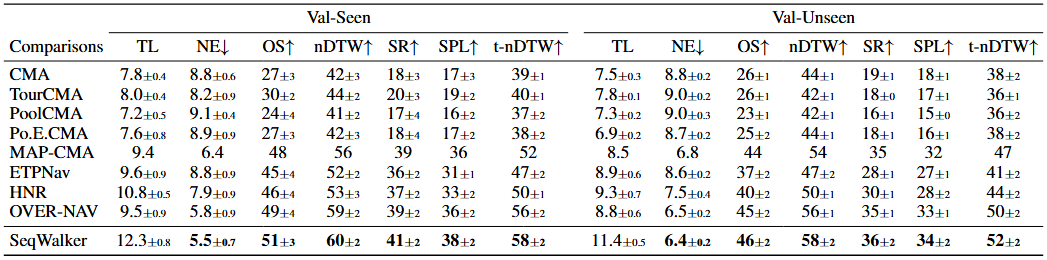

Sequential-Horizon Vision-and-Language Navigation (SH-VLN) presents a challenging scenario in which agents must sequentially execute multi-task navigation trajectories guided by complex, long-horizon natural language instructions. Current vision-and-language navigation models exhibit significant performance degradation under such instructions, because information overload impairs the agent's ability to attend to the details relevant to its current observations. To address this problem, we propose SeqWalker, a novel navigation model built on a hierarchical planning framework. SeqWalker features: (1) a High-Level Planner that dynamically selects contextually relevant sub-instructions from the global instruction based on the agent's current visual observations, thus reducing cognitive load; and (2) a Low-Level Planner incorporating an Exploration-Verification strategy that leverages the inherent logical structure of instructions for trajectory error correction. To evaluate SH-VLN performance, we also extend the IVLN dataset and establish a new benchmark. Extensive experiments demonstrate the effectiveness and superiority of SeqWalker.
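As a purely illustrative sketch of the sub-instruction selection idea in (1), and not the SeqWalker implementation, the snippet below picks the sub-instruction whose feature vector is most similar to the current observation. The cosine-similarity scoring, the function names `cosine` and `select_sub_instruction`, and the toy feature vectors are all assumptions introduced here; the abstract does not specify how the High-Level Planner scores relevance.

```python
import math
from typing import Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def select_sub_instruction(
    observation: Sequence[float],
    sub_instruction_feats: Sequence[Sequence[float]],
) -> int:
    """Return the index of the sub-instruction most similar to the observation.

    Hypothetical stand-in for a high-level planner: relevance is assumed to be
    cosine similarity in a shared embedding space. Ties go to the lowest index.
    """
    scores = [cosine(observation, feat) for feat in sub_instruction_feats]
    return max(range(len(scores)), key=scores.__getitem__)


# Toy example: three sub-instructions with hand-made (hypothetical) features.
sub_instructions = [
    "walk down the hallway",
    "enter the kitchen",
    "stop next to the sofa",
]
feats = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
observation = [0.1, 0.9, 0.2]  # assumed embedding of the current view

chosen = select_sub_instruction(observation, feats)
print(sub_instructions[chosen])
```

In this toy setting the agent would condition its low-level policy only on the selected sub-instruction rather than on the full long-horizon instruction, which is the intuition behind reducing the instruction context.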

(a) Statistics of the transformed datasets.

(b) Navigation word cloud.